In this section, we study some of the deepest and most interesting parts of the theory of Markov chains, involving two different but complementary ideas: stationary distributions and limiting distributions. The theory of renewal processes plays a critical role.

As usual, our starting point is a (time homogeneous) Markov chain with countable state space and transition probability matrix .

Let and let . We will denote the number of visits to during the first time units by

Note that as , where

is the total number of visits to , one of the important random variables that we studied in the section on Transience and Recurrence. Next we denote the time of the visit to by

where as usual, we define . Note that is the time of the first visit to , which we denoted simply by in the section on Transience and Recurrence. Recall also the definition of the hitting probability:

Suppose that is recurrent and that . Use the Markov and time-homogeneity properties to argue that

Thus, is the sequence of arrival times and is the associated sequence of counting variables. The corresponding renewal function is

Note that as where is the potential matrix that we studied previously. This matrix gives the expected total number visits to starting at :

The limit theorems of renewal theory can now be used to explore the limiting behavior of the Markov chain. Let denote the mean return time to state , starting in . In the following three exercises, we assume again that is recurrent, and we interpret .

Use the strong law of large numbers for renewal theory to show that

Use the elementary renewal theorem to show that

Suppose in addition that is aperiodic. Use the renewal theorem to show that

Note that by the very definition of a recurrent state. Thus, when , Exercise 2 gives convergence with probability 1, and the limit in Exercise 2, Exercise 3, and Exercise 4 is . By contrast, we already know the corresponding limiting behavior when is transient, since the number of visit to is finite with probability 1, and the expected number of visits to is finite.

Suppose that is transient. Show that for every ,

On the other hand, the return time to is infinite with positive probability, by the very definition of a transient state. Thus , and thus, the results in parts (b) and (c) agree with Exercise 3 and Exercise 4, respectively. In particular, Exercise 3, which is our fundamental limiting result in many ways, holds for any states and .

Clearly there is a fundamental dichotomy in terms of the limiting behavior of the chain, depending on whether the mean return time to a given state is finite or infinite. Thus, state is said to be positive recurrent if .

Show that if is positive recurrent, then is recurrent. That is, note that if then .

On the other hand, it is possible to have , so that is recurrent, and also , so that is not positive recurrent. (A random variable can be finite with probability 1, but can have infinite mean.) In such a case, is said to be null recurrent. Note that if is positive recurrent, then the limits in Exercise 2, Exercise 3, and Exercise 4 are positive if leads to and 0 otherwise. If is null recurrent or transient, the limits in Exercise 2, Exercise 3, and Exercise 4 are 0.

Like recurrence/transience, and period, the null/positive recurrence property is a class property.

Show that if is positive recurrent and then is positive recurrent.

Thus, the terms positive recurrent and null recurrent can be applied to equivalence classes (under the to and from equivalence relation), as well as individual states. When the chain is irreducible, the terms can be applied to the chain as a whole.

Suppose that is a finite, closed set of states. Show that contains a positive recurrent state.

Suppose again that is a finite, closed set of states. Show that contains no null recurrent states.

Suppose that is a finite, closed, and irreducible set of states. Show that is a positive recurrent equivalence class.

In particular, a Markov chain with a finite state space cannot have null recurrent states; every state must be transient or positive recurrent.

Returning to the limiting behavior, suppose that the chain is irreducible, so that either all states are transient, all states are null recurrent, or all states are positive recurrent. From Exercise 3 and Exercise 4, if the chain is transient or if the chain is recurrent and aperiodic, then

Of course in the transient case and in the null recurrent, aperiodic case, the limit is 0. Only in the positive recurrent, aperiodic case is the limit positive; in this case, the chain is said to ergodic. In all of these cases, the limit is independent of the initial state . In the ergodic case, as we will see, has a limiting distribution as that is independent of the initial distribution.

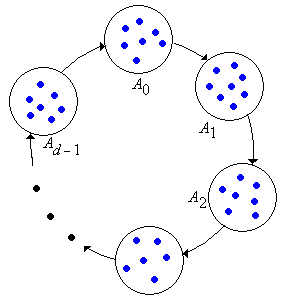

The behavior when the chain is periodic with period is a bit more complicated, but we can understand this behavior by considering the -step chain that has transition matrix . Essentially, this allows us to trade periodicity (one form of complexity) for reducibility (another form of complexity). Specifically, recall that the -step chain is aperiodic but has equivalence classes ; and these are the cyclic classes of original chain .

Note that every single step for the -step chain corresponds to steps for the original chain. Thus, show that the mean return time to state for the -step chain is .

Use the result of the previous exercise and the cyclic behavior of the chain to prove the following results, where the indices , , and are in :

Show that if the is null recurrent (or of course transient) then regardless of the period of , as for every .

Our next goal is to see how the limiting behavior is related to invariant distributions. Suppose that is a probability density function on the state space . Recall that is invariant for (and for the chain ) if . It follows immediately that for every . Thus, if has probability density function then so does for each , and hence is a sequence of identically distributed random variables.

Suppose that is left-invariant for , and let . If then defined by for is an invariant probability density function.

Suppose that is a nonnegative function on that is left-invariant for and satisfies . Show that

Note that if is transient or null recurrent, then . Thus, an invariant probability distribution must be concentrated on the positive recurrent states.

Suppose now that the chain is irreducible. If the chain is transient or null recurrent, then the only nonnegative functions that are left-invariant for are functions that satisfy and the function that is identically 0: for all . In particular, the chain does not have an invariant distribution. On the other hand, if the chain is positive recurrent, then for all and . Thus, the only possible invariant probability density function is the function given by for . Any other nonnegative function that is left-invariant for and has finite sum, is a multiple of (and indeed the multiple is sum of the values). Our next goal is to show that really is an invariant probability density function.

Suppose that is an irreducible, positive recurrent chain. Show that the function given by for is an invariant probability density function for .

In summary, an irreducible, positive recurrent Markov chain has a unique invariant probability density function given by for . We also now have a test for positive recurrence. An irreducible Markov chain is positive recurrent if and only if there exists a nonnegative function on that is left-invariant for , not identically 0, and satisfies (and then, of course, normalizing would give ).

Consider now a general Markov chain on . If has no positive recurrent states, then as noted earlier, there are no invariant distributions. Thus, suppose that has a collection of positive recurrent equivalence classes where is a nonempty, countable index set. The chain restricted to is irreducible and positive recurrent for each , and hence has a unique invariant probability density function on given by

Show that is an invariant probability density function for if and only if has the form for and where is a probability density function on the index set .

Consider again the general two-state chain on with transition probability matrix given below, where and are parameters with and .

Consider a Markov chain with state space and transition matrix given below:

Consider a Markov chain with state space and transition matrix given below:

Consider a Markov chain with state space and transition matrix given below:

Consider the Markov chain with state space and transition matrix given below:

Read the discussion of invariant distributions and limiting distributions in the Ehrenfest chains, and work the problems.

Read the discussion of invariant distributions and limiting distributions in the Bernoulli-Laplace chain, and work the problems.

Read the discussion of positive recurrence and invariant distributions for the reliability chains, and work the problems.

Read the discussion of positive recurrence and limiting distributions for the birth-death chain, and work the problems.

Read the discussion of positive recurrence and for the queuing chains, and work the problems.

Read the discussion of positive recurrence and limiting distributions for the random walks on graphs, and work the problems.