Suppose that we have a sequence of trials, each of which results in either success or failure. Our basic assumption is that if there have been consecutive successes, then the probability of success on the next trial is where . Whenever there is a failure, we start over, independently, with a new sequence of trials. Appropriately enough, is called the success function. Let denote the length of the run of successes after trials.

Argue that is a Markov chain with state space and transition probability function

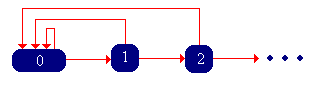

This Markov chain is called the success-runs chain. The state graph of is given below:

Now let denote the trial number of the first failure, starting with a fresh sequence of trials. Note that in the context of the success-runs chain , , the first return time to state 0, starting in 0. Note that takes values in , since, presumably, it is possible that no failure occurs. Let for , the probability of at least consecutive successes, starting with a fresh set of trials. Let for , the probability of exactly , consecutive successes, starting with a fresh set of trails.

Show that

Thus, the functions , , and give equivalent information. If we know one of the functions, we can construct the other two, and hence any of the functions can be used to define the success-runs chain.

The function is the reliability function associated with . Show that it is characterized by the following properties:

Show that the function is characterized by the following properties:

Essentially, is the probability density function of , except that it may be defective in the sense that the sum of its values may be less than 1. The leftover probability, of course, is the probability that . This is the critical consideration in the classification of the success-runs chain, which we consider in the next paragraph.

Verify that each of the following functions has the appropriate properties, and then find the other two functions:

The success-runs applet is a simulation of the success-runs chain based on Bernoulli trials. Run the simulation 1000 times for various values of , and note the limiting behavior of the chain.

From the state graph, show that the success-runs chain is irreducible and aperiodic.

Recall that has the same distribution as the first return time to 0 starting at state 0. Thus, the classification of the chain as recurrent or transient depends on . Specifically, the success-runs chain is transient if and recurrent if . Thus, we see that the chain is recurrent if and only if a failure is sure to occur. We can compute the parameter in terms of each of the three functions that define the chain.

Show that

When the success-runs chain is recurrent, we can define a related random process. Let denote the number of trials remaining until the next failure, after trials.

Argue that is a Markov chain with state space and transition probability function

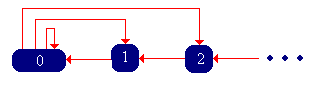

The Markov chain is called the remaining life chain. Verify the state graph below and show that this chain is also irreducible, aperiodic, and recurrent.

Compute and determine whether the success-runs chain is transient or recurrent for each of the cases in Exercise 5.

Run the simulation of the success-runs chain 1000 times for various values of , starting in state 0. Note the return times to state 0.

Let , the expected trial number of the first failure, starting with a fresh sequence of trials.

Show that the success-runs chain is positive recurrent if and only if , in which case the invariant distribution has probability density function given by

Show that

Suppose that , so that the remaining life chain is well-defined. Show that this chain is also positive recurrent if and only if , with the same invariant distribution as (with probability density function given in the previous exercise).

Determine whether the success-runs chain is transient, null recurrent, or positive recurrent for each of the cases in Exercise 5. If the chain is positive recurrent, find the invariant probability density function.

The success-runs chain corresponding to Bernoulli trials has a geometric distribution as the invariant distribution. Run the simulation of the success-runs chain 1000 times for various values of . Note the apparent convergence of the empirical distribution to the invariant distribution.

Suppose that , so that the success-runs chain and the remaining-life chain are positive recurrent.

Show that and are time reversals of each other, and use this fact to show again that is the invariant probability density function.

Run the simulation of the success-runs chain 1000 times for various values of , starting in state 0. If you imagine watching the simulation backwards in time, then you can see a simulation of the remaining life chain.