The Markov property, that the past and future are independent given the present, essentially treats the past and future symmetrically. However, there is a lack of symmetry in the fact that, in the usual formulation, we have an initial time 0, but not a terminal time. If we introduce a terminal time, then we can run the process backwards in time. In this section, we are interested in the following questions:

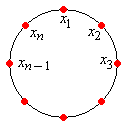

As usual, our starting point is a (time homogeneous) Markov chain with state space and transition probability matrix . Let be a positive integer, which we will think of as the terminal time. Define for . Thus, the process forward in time is while the process backwards in time in

Use basic conditional probability arguments and the Markov property for to show that for every positive integer and for every sequence of states ,

Since the right-hand side depends only on , , , and , the backwards process is a Markov chain, but is not time-homogeneous in general. Note however, that the backwards chain will be time homogeneous if has an invariant distribution. Thus, from now on, we will assume that our original chain is irreducible and positive recurrent with (unique) invariant probability density function .

Show that if has probability density function , then is a time-homogeneous Markov chain with transition probability matrix given by

Show that if does not have probability density function , but is aperiodic, then the expression in Exercise 1 converges to defined in Exercise 2 as .

Thus, we now define the time reverse of the chain to be the Markov chain with transition probability matrix defined in Exercise 2, regardless of the initial distribution of and without regard to a finite time horizon . The results in Exercise 1, Exercise 2, and Exercise 3 are motivation for this definition. Note that the fundamental relationship between the transition probability matrices and is

Note that

Show that for every sequence of states ,

Show that for every and ,

Show that

Suppose for a moment that is only assumed to be irreducible. Show that if there exists a probability density function and a transition probability matrix such that for all , then

Clearly, an interesting special case occurs when the transition probability matrix of the time-reversed chain turns out to be the same as the original transition probability matrix. A chain of this type could be used to model a physical process that is stochastically the same, forward or backward in time.

Suppose again that is an irreducible, positive recurrent Markov chain with transition probability matrix and invariant probability density function . The chain is said to be reversible if

Thus, is also the transition probability matrix of the time-reversed chain. Specifically, by Exercise 2, if has probability density function , then is the transition probability matrix of the chain backwards in time relative to any terminal time .

Show that is reversible if and only if for every sequence of states ,

Show is reversible if and only if for every and ,

The basic reversibility condition uniquely determines the invariant probability density function. Suppose for a moment that is only assumed to be irreducible. Show that if there exists a probability density function such that for all , then

If we have reason to believe that a Markov chain is reversible (based on modeling considerations, for example), then the condition in the previous exercise can be used to find the invariant probability density function . This procedure is often easier than using the definition of invariance directly.

The following exercise gives a condition for reversibility that does not directly reference the invariant distribution. The condition is known as the Kolmogorov cycle condition, and is named for Andrei Kolmogorov

Suppose that is irreducible and positive recurrent. Show that is reversible if and only if for every sequence of states ,

Note that the Kolmogorov cycle condition states that the probability of visiting states in sequence, starting in state is the same as the probability of visiting states in sequence, starting in state .

Recall the general two-state chain on with the transition probability matrix

where and are parameters with and . Show that is reversible and to use the basic reversibility condition to show once again that the invariant probability density function is .

Suppose that is a Markov chain on a finite state space with symmetric transition probability matrix . Thus for all . Show that is reversible and that the uniform distribution on is invariant.

Consider the Markov chain on with transition probability matrix given below:

Read the discussion of reversibility for the Ehrenfest chains, and work the problems.

Read the discussion of reversibility for the Bernoulli-Laplace chain, and work the problems.

Read the discussion of reversibility for the random walks on graphs, and work the problems.

Read the discussion of time reversal for the reliability chains, and work the problems.

Read the discussion of reversibility for the birth-death chains.